A Harvard-led study has shown that an artificial intelligence model can diagnose emergency room patients more accurately than human doctors at the critical first point of contact. The findings, published this week in the journal Science, highlight AI’s potential to support overwhelmed A&E departments, though experts are calling for rigorous real-world testing before the technology is widely used.

Researchers from Harvard Medical School and Beth Israel Deaconess Medical Centre tested OpenAI’s o1 and 4o models against clinicians using records from 76 real A&E cases. Two senior internal medicine consultants diagnosed the same cases. Independent physicians then scored their diagnoses alongside the AI outputs without knowing which had come from a doctor and which from a model.

The o1 model shone brightest. “At each diagnostic touchpoint, o1 either performed nominally better than or on par with the two attending physicians and 4o,” the study found. Its lead was most evident at initial triage, when patient details are scarcest and decisions most urgent. Working from unaltered electronic records alone, o1 gave the exact or a very close diagnosis in 67 per cent of triage cases, compared with 55 per cent and 50 per cent for the two doctors.

“We tested the AI model against virtually every benchmark, and it eclipsed both prior models and our physician baselines,” said Arjun Manrai, who leads an AI research lab at Harvard Medical School and co-authored the paper.

The study fed the models only text-based data, and the authors acknowledged that it did not test how they handle images or scans. They stressed an urgent need for prospective trials in live clinical settings. Adam Rodman, a Beth Israel clinician and another lead author, pointed to gaps in oversight.

“There’s no formal framework right now for accountability around AI diagnoses,” he told the Guardian, adding that patients want humans to guide them through life-or-death decisions and challenging treatments.

Not everyone is convinced. Emergency physician Kristen Panthagani described the work as “an interesting AI study that has led to some very overhyped headlines,” criticising its use of internal medicine specialists rather than emergency medicine doctors as the human comparison.

“If we’re going to compare AI tools to physicians’ clinical ability, we should start by comparing to physicians who actually practice that specialty,” she said. Her priority at a patient’s first visit is not pinpointing the final diagnosis but spotting conditions that could kill.

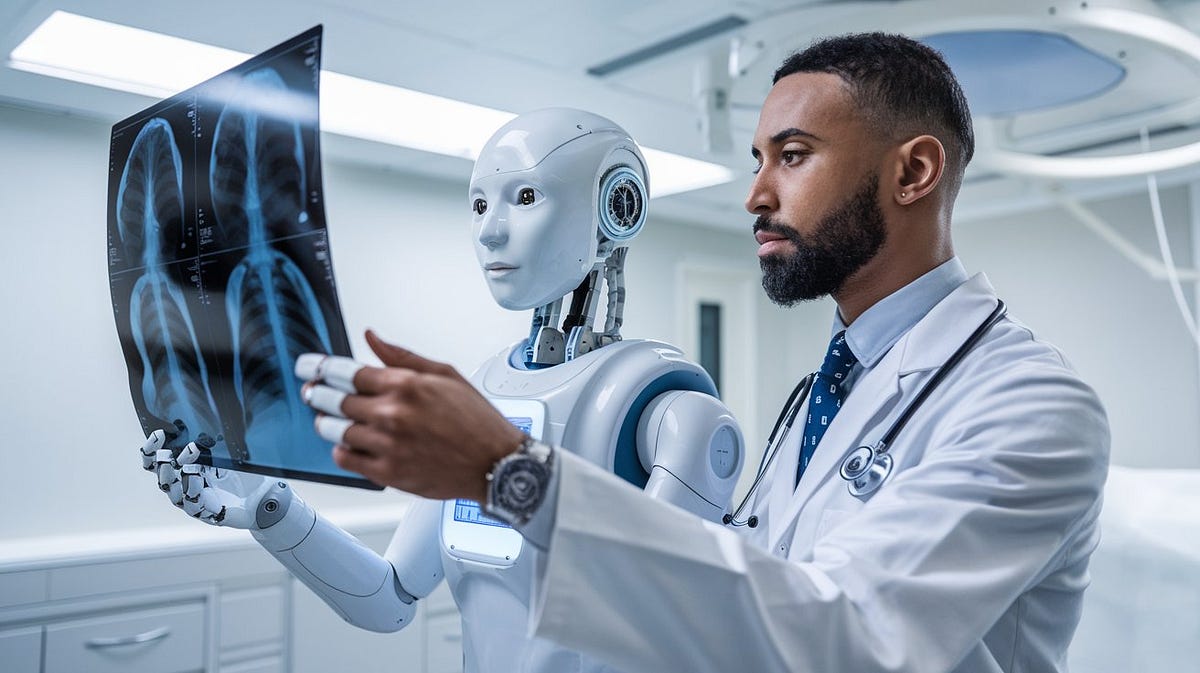

In the UK, where NHS waiting lists topped 7.6 million last month, the results have drawn interest as the Medicines and Healthcare products Regulatory Agency (MHRA) plans for AI device approvals by 2027. Yet as Manrai emphasised to Harvard news outlets, the goal is to augment doctors, not replace them.